The back story

Street exhibitions

A large part of our research into the history of Brighton & Hove has been to discover more about the North Laine area, once the industrial heart of the city.

To this end, volunteers have transcribed census records spanning 1851 to 1911 for twenty-four streets in the North Laine and have made a database of who lived in each house in the past.

This occupancy data enabled us to present street exhibitions, in the form of a printed poster attached to each house, listing all the people who had lived there since 1851.

These street exhibitions proved very popular with the public.

We next began to think about how to build on this by using the raw facts and figures as a gateway to an engaging and immersive experience based on the lives and times of people who lived in Brighton all those years ago.

Enter the science

While pondering our next move we were introduced to Dr Simon Julier of University College London.

Then a Senior Researcher in the Department of Computer Science, Simon was looking for a heritage site that could make practical use of the 3D tracking, pattern recognition and mobile software that he and his colleagues were working on.

Clearly, a mobile system that was aware of its location and could recognize a particular building and track the space in 3D offered some fantastic possibilities.

The question became: how to use all this science to tell our stories?

The thing we call VisAge

After a couple of false starts we hit on an idea best described as a combined X-Ray camera and time machine; something that could look inside a building, visualise the occupants at different times in history and enable users to listen to their stories.

This is the first design sketch. It was made in January 2013 and incorporates the basic features of what we would later call VisAge (a name hewn roughly from ‘visualize data from ages past’).

VisAge would combine the sciences into an augmented reality: what you see today, with a layer of yesterday on top.

The aim was for UCL to work on the programming and other technical challenges, while we would write stories based on the occupancy data and then turn these into audio recordings.

VisAge version 1

This would be a prototype; something that could be built quickly, loaded onto a tablet computer, then tested in public trials to gather feedback.

We drew up a list of things it should be able to do:

- Recognize the façade of a specific building

- Behind that façade render the internal space in 3D

- Within the space place images to represent occupants

- Link each occupant image to an audio narrative file

- Provide a control to move between time periods

The image below shows one of the many visits to Brighton made by the team from UCL to test the software ‘in the wild’. Here you see, from left to right, Town House intern Alex Kewn and UCL's Simon Julier and Seb Axelsen.

Below, in this view over Simon's shoulder it is possible to see on the screen the 3D rendered space with the internal staircase and one of the figures visible.

Note: the choice of ‘test house’ (the one with the pink door) was deliberate. With just one storey above eye level and one below, it does not require much tilting of the screen to visualise the spaces inside, and the double yellow lines make it less likely that parked cars will block the view.

While UCL got on with the coding side of the project we began work on preparing the content.

As followers of popular sci-fi well know, any proper time machine needs some way of selecting a date to travel back to, so we asked UCL to include a date chooser in the interface. To test this, we made content for both 1851 and 1901, thinking that a gap of fifty years might also enable users to see a difference in the costume and environment presented on the screen.

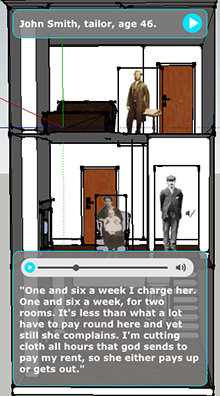

For each date, a pair of very short narratives was prepared, each pair presenting two different views of the same situation: in 1851, a man is annoyed with his lodger for not paying the rent, while she counters by saying that the outside privy doesn’t work; in 1901, a man complains about how poor everyone is, while his son wonders what the future holds.

These stories were recorded and edited to make four audio files. Images of four people of the approximate age and period were found to use as screen icons, and the whole package was then sent to the programmers at UCL for coding into the software.

You can listen to the recordings here.

1851 George Wall, a tailor, aged 49

1851 Mrs Kent, a dressmaker, aged 42

1901 Harry Jones, a shoemaker, aged 35

1901 Peter Jones, Harry's son, aged 12

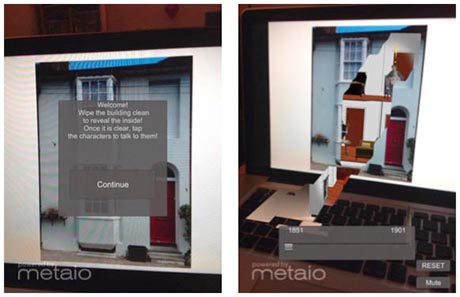

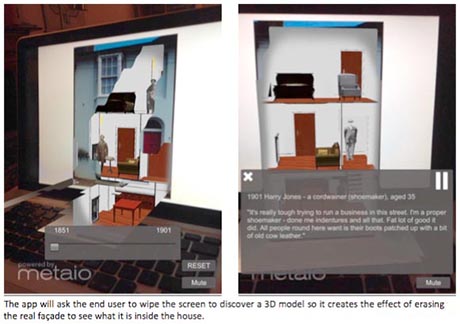

The augmented reality software developed by UCL uses the camera in a tablet computer to send a video feed to the software, which is able to recognize the previously stored façade of a building. The touch-screen interface enables this façade to be visually ‘wiped away’ to reveal rendered 3D ‘rooms’ mapped to the spaces behind it.

A slider control enables either 1851 or 1901 to be selected, each position revealing two different character icons from that period. Clicking on one of these icons plays the linked audio file. To improve accessibility, the transcript of the audio file is also written to the screen.
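
For readers curious about how such content might be organised, here is a minimal sketch in Python of the link between slider position, character icon, audio file and transcript. It is an illustration only and not the code written by UCL: the `Occupant` class, the function names, the audio file names and the placeholder transcripts are all assumptions made for this example. Only the four characters and the two dates come from the prototype described above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Occupant:
    """One character icon shown inside the rendered house (hypothetical model)."""
    year: int          # slider position the character belongs to
    name: str
    description: str
    audio_file: str    # hypothetical file name, not the real asset name
    transcript: str    # placeholder; the real transcripts are not reproduced here


# The four characters prepared for VisAge version 1.
OCCUPANTS = [
    Occupant(1851, "George Wall", "a tailor, aged 49",
             "1851_george_wall.mp3", "(transcript placeholder)"),
    Occupant(1851, "Mrs Kent", "a dressmaker, aged 42",
             "1851_mrs_kent.mp3", "(transcript placeholder)"),
    Occupant(1901, "Harry Jones", "a shoemaker, aged 35",
             "1901_harry_jones.mp3", "(transcript placeholder)"),
    Occupant(1901, "Peter Jones", "Harry's son, aged 12",
             "1901_peter_jones.mp3", "(transcript placeholder)"),
]


def occupants_for(year, occupants=OCCUPANTS):
    """Return the characters to display when the slider is set to `year`."""
    return [o for o in occupants if o.year == year]


def select_occupant(year, name, occupants=OCCUPANTS):
    """Return the character whose icon was chosen, so that the caller can
    play its audio file and write its transcript to the screen."""
    for occupant in occupants_for(year, occupants):
        if occupant.name == name:
            return occupant
    raise KeyError(f"No occupant named {name!r} in {year}")


if __name__ == "__main__":
    for year in (1851, 1901):
        print(year, [o.name for o in occupants_for(year)])
```

Keeping the stories as plain data, separate from the code that draws the rooms, is one way that new dates or characters could be added without changing the program itself.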

The system was tested in September 2013 and met with an extremely positive response.

Download feedback here: VisAge v1 test data (37KB pdf)

We made a leaflet to explain the project to the public.

Download here: side 1, Background to VisAge (692KB pdf)

Download here: side 2, How VisAge works (956KB pdf)

VisAge version 2

Following the proof-of-concept tests with VisAge v1, it was clear that there were three areas that needed to be improved.

1. The system should have a back-end authoring system to enable users to make and edit stories for themselves, without the need for any programming skills.

2. The system should be distributable, either as a downloadable app or as a mobile website.

3. An interface is required to guide users to places of interest and enable them to interact with what they find there.

Development of VisAge continued intermittently through 2014 as other projects required our attention, but a new prototype was beginning to emerge. By the end of the year, UCL had produced a complete end-to-end wireframe of the application and made working interfaces for authoring and mobile users.

Here is a series of screenshots of the user interface.

In early 2015, UCL brought the prototype to Brighton to upload some sample content and test.

For content, we had photographed the houses of some illustrious neighbours memorialized with a blue plaque, together with materials that relate to their lives - a musical composition, a painting of a ship in battle, an artwork, and so on.

After deploying the software on a variety of devices and uploading the image and audio assets we set off with a group of volunteers to beta-test the application in the wild. Well, as wild as it gets in Brunswick Square in March.

That's us in the picture below - a group of volunteers from the Town House try to break the application, while the two Anas from UCL take notes on the left.

Encouraged by the test results, development continued with the target of a public user test at the upcoming Open Days event in Brighton, in September 2016.

The September test session was recorded on film by Mattia Pagura and you can see me and others talking about it here:

Tuesday October 19th, 2016. The story to be continued.

VisAge is a collaborative project: researchers and students at University College London designed the science behind VisAge, while volunteers from The Regency Town House provided the cultural heritage content.

University College London

Simon Julier

Sebastian Axelsen

Andras Takas

Will Steptoe

Ana Moutinho

Ava Fatah gen. Schieck

Aitor Rovira

Petros Koutsolampros

Efstathia Kostopoulou

Ana Jarvornik

S.Julier@ucl.ac.uk

Thanks to Mattia Pagura for his film-making skills.

The Regency Town House

Volunteers and students

Phil Blume

Nick Tyson

e: phil@rth.org.uk